AI Act adopted by European Parliament

Curious what the AI Act means for you? Use our online tool "EU AI Act: Which rules apply to you?"

Today's crucial vote: European Parliament approved the AI Act

Today, the European Parliament approved the Artificial Intelligence Act. The regulation, agreed in negotiations with the Member States in December 2023, was endorsed by the Members of the European Parliament with 523 votes in favour, 46 against and 49 abstentions.

Aim of the AI Act

The AI Act aims to protect fundamental rights, democracy, the rule of law and environmental sustainability from high-risk AI, while boosting innovation and establishing Europe as a leader in the field. The regulation sets obligations for AI systems according to their potential risks and level of impact.

Risk-based approach

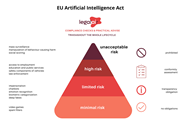

The EU AI Act takes a risk-based approach: it sorts AI applications into levels of risk, and the level determines how much regulatory oversight is required.

The AI Act introduces a four-level framework to classify AI risks:

- Unacceptable Risk: banned AI practices, like social scoring by governments;

- High Risk: AI systems used in areas such as critical infrastructure and law enforcement, which are subject to strict compliance obligations;

- Limited Risk: AI systems with specific transparency obligations, like chatbots; and

- Minimal or No Risk: AI applications such as video games or spam filters, which are largely unregulated.

Some highlights

Banned AI Applications

- Biometric categorisation based on sensitive characteristics

- Untargeted scraping of facial images for recognition databases

- Emotion recognition in workplaces and schools

- Social scoring, and predictive policing based solely on profiling

- AI that manipulates human behaviour or exploits people's vulnerabilities

Law enforcement exemptions

- A ban in principle on the use of biometric identification systems by law enforcement, with narrow, strictly defined exceptions

- Real-time use allowed only under strict conditions, such as a targeted search for a missing person or preventing a terrorist attack, and only with prior authorisation

- Post-event (retrospective) use must be linked to a criminal offence and requires judicial authorisation

Obligations for high-risk AI systems

High-risk systems, for example those used in critical infrastructure, education, employment, essential public services, law enforcement and democratic processes (such as elections), must assess and reduce risks, maintain logs, be transparent and ensure human oversight. The AI Act also gives citizens the right to submit complaints about AI systems and to receive explanations of decisions based on high-risk AI systems that affect their rights.

Transparency requirements

General-purpose AI systems must comply with EU copyright law and publish summaries of the data used for training; the most powerful models must also carry out risk assessments and report serious incidents. Open-source AI benefits from certain exceptions. Furthermore, deepfakes must be clearly labelled.

Innovation and SME support

Regulatory sandboxes and real-world testing environments will be established at national level and made accessible to SMEs and startups, so that they can develop and train AI.

Timeline

The regulation still has to undergo a final lawyer-linguist check and be formally endorsed by the Council. It will enter into force twenty days after its publication in the Official Journal and will be fully applicable 24 months after its entry into force, except for:

- bans on prohibited practices (6 months after entry into force);

- codes of practice (9 months after entry into force);

- general-purpose AI rules including governance (12 months after entry into force); and

- obligations for high-risk systems (36 months after entry into force).

Questions? We can help!

We can help you tackle the AI Act by:

- Explaining the text in clear and understandable terms;

- Assessing the compliance and possible risks of your AI system;

- Drafting an AI policy for your business;

- Helping you prepare for an AI audit;

- Conducting a Human Rights and Algorithm Impact Assessment (IAMA); and

- Helping you establish whether your AI system is high risk (you can also check this yourself with our online tool).

For more information on the AI Act or on our services, please contact Jos van der Wijst (wijst@bg.legal).